Sharing knowledge with other TwinCAT developers through a blog is not only easy and effective, it’s also quite fun. After a long day at work, I often find it enjoyable to sit down and write a little on a new blog post. Quite recently I launched TcUnit – the TwinCAT unit testing framework, and even though there is now plenty of documentation and example code on the official website, some people prefer to learn by watching a video. For this reason I’ve created a series of four videos that introduce TwinCAT software developers to test-driven development (TDD) and how to do TDD using TcUnit.

TcUnit – A TwinCAT unit testing framework

I’m very happy to announce the release of TcUnit – a unit testing framework for TwinCAT3. TcUnit is an xUnit type of framework built specifically for Beckhoff’s TwinCAT3 development environment. This is another step toward modernizing software development practices in the world of automation.

Before delving into the details, let me give the background of this project. In 2016 the development of the CorPower wave energy converter (WEC) was in an intensive phase. Software was being finalized, tested and verified for delivery. In a late phase of the project some critical parts of the software needed to be changed. The changes could be isolated to a few function blocks (FBs), so initially the tests simply consisted of exporting those FBs to a separate project and running them locally on the engineering PC. The FBs were changed and executed in the engineering environment, and testing was done by online-changing the inputs and checking whether the expected outputs were given. After doing this for a couple of hours an important question was raised:

Shouldn’t this be automated?

CI/CD with TwinCAT – part four

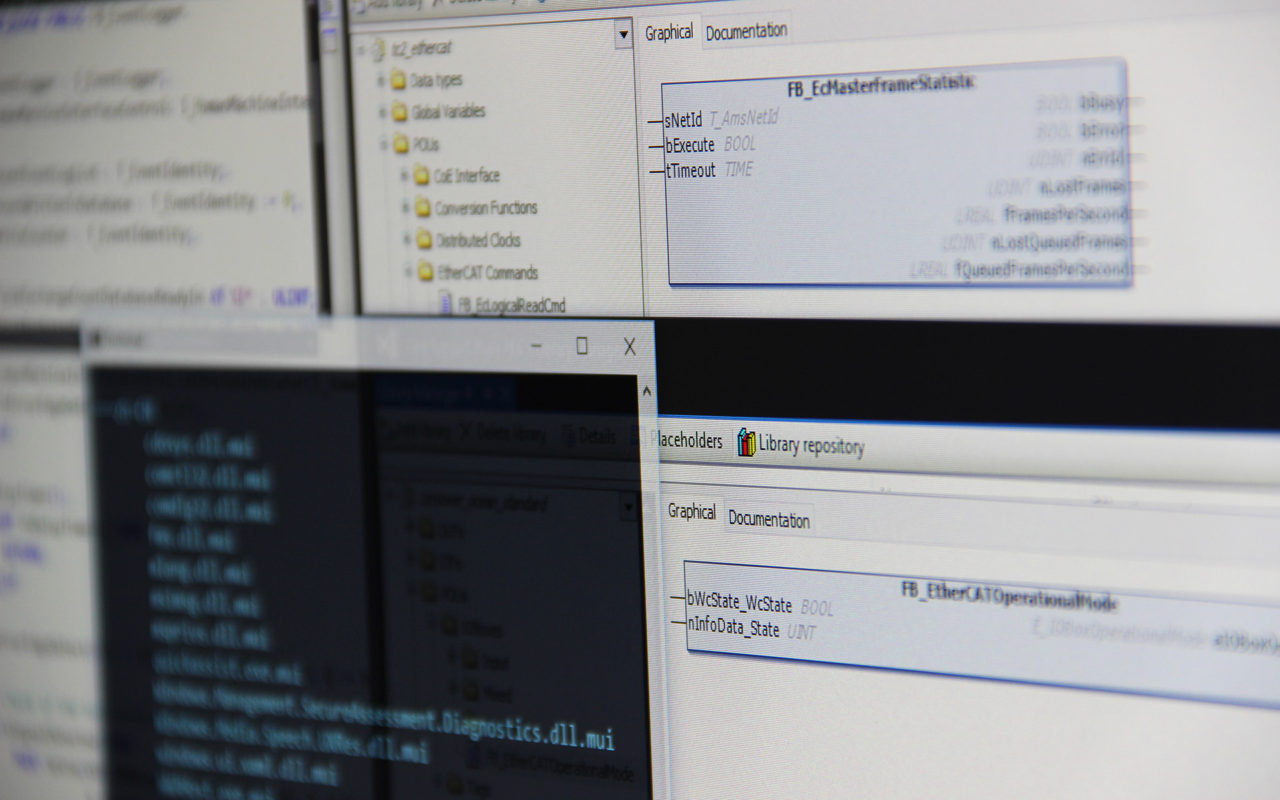

We are at the final post of this series on continuous integration and delivery with TwinCAT. We’ve set up and configured the automation server, created a TwinCAT test library project and written a small batch script that is launched every time a new push is made to the Git repository for the library project. Now it’s time for us to write the program that will do the actual static code analysis.

CI/CD with TwinCAT – part three

In the previous post we installed and configured the software that supports us in automating static code analysis of TwinCAT software. We have reached a state where a Jenkins job is launched as soon as our TwinCAT library project is pushed to the Git repository. Even though we have a Jenkins job defined for static code analysis of TwinCAT software, it’s not doing anything yet. This will be our next step.

Library categories

I like to have things structured and ordered. This does not only include the obvious stuff, such as making sure to pair and match all socks after laundry, but also the TwinCAT software I develop, more specifically the TwinCAT libraries I develop myself. “Whaaat??? What does sorting socks have to do with TwinCAT software?” you might wonder. I’m glad you asked.

CI/CD with TwinCAT – part two

In the previous post we were introduced to the concept of continuous integration and continuous delivery (CI/CD) in the world of PLC software development, more specifically TwinCAT development. The conclusion was that certain steps in the process of creating software can and should be automated. As an example, we set the goal of automating static code analysis of all TwinCAT software. In this part of the CI/CD series we will look into the practical matters of installing and configuring the necessary software.

CI/CD with TwinCAT – part one

The development of PLC software needs to enter the 21st century. With the increased complexity of developed systems, the amount of software that is developed for a given system will continue to increase. PLC development is partially living on an isolated island, and while most other areas of software development have been modernized, the world of PLC software engineering is moving significantly slower. When we develop more software, there is a need for more automation to remove unnecessary manual handling and in the end increase the quality of the software we develop. Now I know that you can’t compare developing software for a web shop with developing software for a PLC, which may run software that is critical, maybe even safety-critical, where one line of code can be the difference between life and death. However, developing reliable and robust software and adhering to modern software development processes and tools do not rule each other out. You can pick both.

TwinCAT & virtualization

Most people who have developed software have at some point or another used virtualization technology. Software development for PLCs in a virtual environment is often overlooked, since PLC development is so close to the hardware. Nevertheless, there are still advantages. Working on several projects with various requirements, but where a Beckhoff PLC/TwinCAT was the common denominator, made me ask myself “How much use can I make of virtualization for TwinCAT software development?”

Mocking objects in TwinCAT

In my earlier posts I’ve written about development of TwinCAT software using test-driven development (TDD), by writing unit tests. One of the advantages of adhering to the process of TDD is that you will mostly end up with function blocks (FBs) which have limited but well-defined responsibility. Eventually, however, you will have FBs that are dependent on other function blocks. These could be FBs that are your own, or part of some third-party library, for example a Beckhoff library. Further, what if this external FB relies on some other functionality, such as external communication using sockets, that we have no control of? The external FBs should already be tested; we’re only interested in making sure our unit tests test our code! What do we do? A solution to this is to mock the external functionality and use dependency injection.
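
To make the idea concrete outside of any particular PLC environment, here is a minimal, language-neutral sketch in Python. It is not TwinCAT code and not taken from the post itself; all names (`Communicator`, `MockCommunicator`, `LimitMonitor`) are hypothetical and chosen only for illustration. The code under test receives its dependency from the outside, so a test can hand it a mock instead of the real socket-based implementation.

```python
from typing import Protocol


class Communicator(Protocol):
    """Interface the code under test depends on (hypothetical name)."""

    def read_value(self) -> int: ...


class MockCommunicator:
    """Mock that returns a canned value instead of using a real socket."""

    def __init__(self, canned_value: int) -> None:
        self.canned_value = canned_value
        self.calls = 0  # lets a test verify that the dependency was used

    def read_value(self) -> int:
        self.calls += 1
        return self.canned_value


class LimitMonitor:
    """Code under test; its dependency is injected through the constructor."""

    def __init__(self, communicator: Communicator, limit: int) -> None:
        self._communicator = communicator
        self._limit = limit

    def limit_exceeded(self) -> bool:
        # Only this comparison is "our" logic; the communication is external.
        return self._communicator.read_value() > self._limit


# A unit test injects the mock, so no external communication takes place.
mock = MockCommunicator(canned_value=12)
monitor = LimitMonitor(mock, limit=10)
assert monitor.limit_exceeded()
```

Because `LimitMonitor` only knows the interface, the same class can be given the real implementation in production and the mock in a unit test.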

The wonders of ANY

While doing software development in TwinCAT, I have always been missing some sort of generic data type/container that would give some level of support for generic programming. “Generic programming… what’s that?”, you may ask. I like Ralf Hinze’s description of generic programming:

A generic program is one that the programmer writes once, but which works over many different data types.
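
As a small illustration of that definition, here is a sketch in Python (not TwinCAT code, and unrelated to how the ANY type works internally). The function name `count_changes` is hypothetical. It is written once, yet works for integers, characters, booleans or any other type whose values can be compared for equality.

```python
from typing import Sequence, TypeVar

T = TypeVar("T")  # placeholder for "whatever element type the caller uses"


def count_changes(samples: Sequence[T]) -> int:
    """Count how many times two consecutive samples differ."""
    return sum(1 for prev, cur in zip(samples, samples[1:]) if prev != cur)


# The same function is reused unchanged for different element types.
int_changes = count_changes([1, 1, 2, 3, 3])
char_changes = count_changes("aabbc")
bool_changes = count_changes([True, False, True])
```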

I’ve been using generics in Ada and templates in C++, and many other languages have similar concepts. Why was there no such thing available in the world of TwinCAT/IEC 61131-3? For a long time there was a link to a type “ANY” in the data types section of the TwinCAT3 documentation, but the only information available on the website was that the “ANY” type was not yet available. By coincidence I revisited the web page to check it out, and now a description is available! I think the documentation does a good job of describing the possibilities of the ANY type, but I wanted to elaborate on this a little further.